How to Load Both Halves of an __m128i Register With 64-Bit Integers

Vector Instructions. Part I

Vector computations are computations where, instead of one operation, multiple operations of the same type are performed on several pieces of data at once when a single processor instruction is executed. This principle is also known as SIMD (Single Instruction, Multiple Data). The name arose from an obvious similarity with vector algebra: operations between vectors have single-symbol designations but involve performing multiple arithmetic operations on the components of the vectors.

Originally, vector computations were performed by specialized coprocessors that used to be a major component of supercomputers. In the 1990s, some x86 CPUs and several processors of other architectures were equipped with vector extensions: special wide registers, together with the vector instructions that operate on them.

Vector instructions are used where there is a need to execute multiple operations of the same type and achieve higher computation performance, such as in computational mathematics, mathematical modeling, computer graphics, and computer games. Without vector computations, it is now impossible to achieve the computing performance needed for video signal processing, especially video coding and decoding. Note that in some applications and algorithms, vector instructions do not increase performance.

This article shows examples of using vector instructions and implementing several algorithms and functions that employ them. These examples are mainly taken from the image and signal processing fields but can also be useful to software developers working in other areas of application. Vector instructions help increase performance, but without guaranteed success: to fully realize the potential offered by the computer, the programmer needs to be not only careful and precise, but often also inventive.

Instructions and registers

Vector computations are computations where multiple operations of the same type are performed at once when a single processor instruction is executed. This principle is now implemented not only in specialized processors, but also in x86 and ARM CPUs in the form of vector extensions, which are special vector registers that are extra wide compared to general-purpose ones. To work with these, special vector instructions are provided that extend the instruction set of the processor.

Normally, vector instructions implement the same operations as scalar (regular) instructions (see Fig. 1) but achieve a higher performance due to the higher volume of data they process. A general-purpose register is expected to hold a single data item of a specific type (e.g. an integer of a certain length or a float) when an instruction is executed, while a vector register simultaneously holds as many independent data items of the relevant type as the register can accommodate. When a vector instruction is executed, the same number of independent operations can be performed at once on this data, and the computation performance is boosted by the same factor. Increasing processor performance by performing multiple identical operations at the same time is the primary purpose of vector extensions.

In x86 CPUs, the first vector extension was the MMX instruction set, which used eight 64-bit registers, MM0–MM7. MMX gave way to the more powerful 128-bit SSE float and SSE2 integer and double-precision float instructions that used the XMM0–XMM7 registers (XMM0–XMM15 in 64-bit mode). Subsequently, the 128-bit SSE3, SSSE3, SSE4.1, and SSE4.2 instruction sets came out, extending SSE and SSE2 with several useful instructions. Most instructions from the above sets use two register operands; the result is written into one of these registers, and its original content is lost.

The next milestone in the development of vector extensions was marked by the even more powerful 256-bit AVX and AVX2 instructions that use the 256-bit YMM0–YMM15 registers. Notably, these instructions use three register operands: two registers store the source data, while the third register receives the operation result, and the contents of the other two remain intact. The most recent vector instruction set is AVX-512, which uses thirty-two 512-bit registers, ZMM0–ZMM31. AVX-512 is used in some server CPUs for high-performance computations.

With the proliferation of 64-bit CPUs, the MMX instruction set became obsolete. Nonetheless, the SSE and SSE2 instructions did not fall into disuse with the advent of AVX and AVX2 and are still actively used. x86 CPUs maintain backward compatibility: if the CPU supports AVX2, it also supports SSE/SSE2, SSE3, SSSE3, SSE4.1, and SSE4.2. Similarly, if the CPU supports SSSE3, for example, it supports all the earlier instruction sets.

For ARM CPUs, the NEON vector extension was developed. These 64- and 128-bit vector instructions use thirty-two 64-bit registers or sixteen 128-bit ones (ARM64 has thirty-two 128-bit registers).

Since vector instructions are tied to a specific processor architecture (and often even to a specific processor), programs that use these instructions become non-portable. Therefore, multiple implementations of the same algorithm using different instruction sets become necessary to achieve portability.
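
One common way to keep several implementations side by side is compile-time selection with preprocessor checks. The sketch below is only an illustration: the function name add_floats and the EXAMPLE_HAVE_* macros are hypothetical, and the predefined macros it tests (__SSE2__, _M_X64, __ARM_NEON) are the ones commonly defined by GCC, Clang, and MSVC. Each path uses the intrinsics of one instruction set, and a scalar loop serves both as the tail handler and as the fallback.

```c
#include <stddef.h>

#if defined(__SSE2__) || defined(_M_X64)
#include <emmintrin.h>          /* SSE and SSE2 intrinsics */
#define EXAMPLE_HAVE_SSE2 1     /* hypothetical macro, for this sketch only */
#elif defined(__ARM_NEON)
#include <arm_neon.h>           /* NEON intrinsics */
#define EXAMPLE_HAVE_NEON 1     /* hypothetical macro, for this sketch only */
#endif

/* Hypothetical helper: dst[i] = a[i] + b[i] for i in [0, len). */
void add_floats(const float *a, const float *b, float *dst, size_t len)
{
    size_t i = 0;
#if defined(EXAMPLE_HAVE_SSE2)
    for (; i + 4 <= len; i += 4) {
        __m128 x = _mm_add_ps(_mm_loadu_ps(a + i), _mm_loadu_ps(b + i));
        _mm_storeu_ps(dst + i, x);
    }
#elif defined(EXAMPLE_HAVE_NEON)
    for (; i + 4 <= len; i += 4) {
        float32x4_t x = vaddq_f32(vld1q_f32(a + i), vld1q_f32(b + i));
        vst1q_f32(dst + i, x);
    }
#endif
    /* Scalar tail; also the complete fallback when no vector path is compiled in. */
    for (; i < len; i++)
        dst[i] = a[i] + b[i];
}
```

Production code often selects the implementation at run time instead (by querying CPU features), which this sketch does not attempt.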

Intrinsics

How can a programmer use vector instructions? First of all, they can be used in assembler code.

It is also possible to access vector instructions from a program written in a high-level language, including C/C++, without using inline assembler code. To that end, the so-called intrinsics are used, which are objects built into the compiler. One or more data types are declared in the header file, and a variable of one of those types corresponds to a vector register. (From the programming point of view, this is a special kind of fixed-length array that does not allow access to the individual array elements.) The header file also declares functions that have arguments and return values of the above types and, from the programming perspective, perform the same operations on data as the corresponding vector instructions. In reality, these functions are not implemented in software: instead, the compiler replaces each call to them with a vector instruction when generating object code. Intrinsics thus allow a program to be written in a high-level language with a performance close or equal to that of an assembler program.

All that is needed to use intrinsics is to include the corresponding header file and, with some compilers, to enable the corresponding compiler options. Although they are not part of the C/C++ language standards, intrinsics are supported by mainstream compilers such as GCC, Clang, MSVC, and the Intel compiler.

They also help streamline the processing of various data types. Note that the processor, at least when it comes to the x86 CPU architecture, does not have access to the type of data stored in a register. When a vector instruction is executed, its data is interpreted as having a specific type associated with that instruction, such as float or integer of a certain size (signed or unsigned). It is the developer's responsibility to ensure the validity of the computations, which requires considerable care, particularly as the data type can sometimes change: for instance, with integer multiplication, the size of the product is equal to the combined size of the multipliers. Intrinsics can ease the task somewhat.
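
To illustrate that the register itself carries no type, the following sketch (the helper name add_two_ways is hypothetical) adds the same two 128-bit patterns twice: once with _mm_add_epi8, which treats them as sixteen 8-bit elements, and once with _mm_add_epi32, which treats them as four 32-bit elements. Only the chosen instruction decides where carries may propagate.

```c
#include <stdint.h>
#include <emmintrin.h>   /* SSE2 intrinsics */

/* Hypothetical helper: adds x and y as 8-bit elements and as 32-bit elements. */
void add_two_ways(const uint32_t x[4], const uint32_t y[4],
                  uint32_t as_bytes[4], uint32_t as_dwords[4])
{
    __m128i a = _mm_loadu_si128((const __m128i *)x);
    __m128i b = _mm_loadu_si128((const __m128i *)y);

    /* Sixteen independent 8-bit additions: a carry never leaves its byte. */
    _mm_storeu_si128((__m128i *)as_bytes, _mm_add_epi8(a, b));

    /* Four independent 32-bit additions: carries cross byte boundaries. */
    _mm_storeu_si128((__m128i *)as_dwords, _mm_add_epi32(a, b));
}
```

With every element of x equal to 0x000000FF and every element of y equal to 1, the byte-wise sum wraps to 0x00000000 while the 32-bit sum is 0x00000100.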

Thus, the XMM vector registers (SSE) have three associated data types [1]:

__m128, an "array" of four single-precision floats

__m128d, an "array" of two double-precision floats

__m128i, a 128-bit register that can be considered an "array" of 8-, 16-, 32-, or 64-bit numbers.

Since a specific vector instruction typically works with only one of the three data types (single-precision float, double-precision float, or integer), the arguments of the functions representing vector instructions also have one of the above three types. The AVX2 type system has a similar design: it provides the types __m256 (float), __m256d (double), and __m256i (integer).

The NEON intrinsics implement an even more advanced type system [2] where a 128-bit register is associated with the types int32x4_t, int16x8_t, int8x16_t, float32x4_t, and float64x2_t. NEON also provides multi-register data types, such as int8x16x2_t. In this kind of system, the specific type and size of register contents are known at all times, so there is less room for error when type conversion occurs and the data size changes.

Consider an example of a simple function implemented using the SSE2 instruction set.

- // 1.2.1: Example of SSE2 intrinsics

- // for int32_t

- #include <stdint.h>

- // for SSE2 intrinsics

- #include <emmintrin.h>

- void bar(void)

- {

- int32_t array_a[4] = {0,2,1,2}; // 128 bits

- int32_t array_b[4] = {8,5,0,6};

- int32_t array_c[4];

- __m128i a,b,c;

- a = _mm_loadu_si128((__m128i*)array_a); // loading array_a into register a

- b = _mm_loadu_si128((__m128i*)array_b);

- c = _mm_add_epi32(a, b); // must be { 8,7,1,8 }

- _mm_storeu_si128((__m128i*)array_c, c);

- }

In this example, the contents of array_a are loaded into one vector register and the contents of array_b into another. The corresponding 32-bit register elements are then added together, and the result is written into a third register and finally copied to array_c. This example highlights another notable feature of intrinsics. While _mm_add_epi32 takes two register arguments and returns one register value, the paddd instruction corresponding to _mm_add_epi32 has only two actual register operands, one of which receives the operation result and therefore loses its original contents. To preserve the register contents when compiling the "c = _mm_add_epi32(a, b)" expression, the compiler adds operations that copy the data between registers.

The names of intrinsics are chosen so as to improve source code readability. In the x86 architecture, a name consists of three parts: a prefix, an operation designation, and a scalar data type suffix (Fig. 2, a). The prefix indicates the vector register size: _mm_ for 128 bits, _mm256_ for 256 bits, and _mm512_ for 512 bits. Some data type designations are listed in Table 1. The NEON intrinsics in ARM have a similar naming pattern (Fig. 2, b). Recall that there are two types of vector registers (64- and 128-bit). The letter q indicates that the instruction is for 128-bit registers.

The names of the intrinsic data types (__m128i and others) and functions have become a de facto standard in different compilers. In the rest of this text, vector instructions will be referred to by their intrinsic names rather than mnemonic codes.

Essential vector instructions

This section describes the essential instruction classes. It lists examples of frequently used and helpful instructions, mainly from the x86 architecture but also from ARM NEON.

Data exchange with RAM

Before the processor can do anything with data residing in RAM, the data first has to be loaded into a processor register. Then, after the processing, the data needs to be written back into RAM.

Most vector instructions are register-to-register, that is, their operands are vector registers, and the result is also written into a vector register. There is a range of specialized instructions for data exchange with RAM.

The _mm_loadu_si128(__m128i* addr) instruction retrieves a 128-bit contiguous array of integers with a start address of addr from RAM and writes it into the selected vector register. In contrast, the _mm_storeu_si128(__m128i* addr, __m128i a) instruction copies a 128-bit contiguous array of data from the register a into RAM, starting from the address addr. The address used with these instructions, addr, can be arbitrary (but, of course, should not cause array overruns on read and write). The _mm_load_si128 and _mm_store_si128 instructions are similar to the above ones and potentially more efficient but require that addr be a multiple of 16 bytes (in other words, aligned to a 16-byte boundary); otherwise, a hardware exception will be raised when executing them.
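
The difference between the aligned and unaligned variants can be sketched as follows. The helper names double_aligned and double_unaligned are hypothetical; the first one assumes, as a precondition the caller must guarantee, that its pointer is a multiple of 16 bytes.

```c
#include <stdint.h>
#include <emmintrin.h>   /* SSE2 intrinsics */

/* Hypothetical helper: doubles four 32-bit integers in place.
   Precondition (assumed by this sketch): p is aligned to 16 bytes. */
void double_aligned(int32_t *p)
{
    __m128i v = _mm_load_si128((const __m128i *)p);    /* aligned load  */
    v = _mm_add_epi32(v, v);
    _mm_store_si128((__m128i *)p, v);                  /* aligned store */
}

/* Same operation, but p may be any valid address. */
void double_unaligned(int32_t *p)
{
    __m128i v = _mm_loadu_si128((const __m128i *)p);   /* unaligned load  */
    v = _mm_add_epi32(v, v);
    _mm_storeu_si128((__m128i *)p, v);                 /* unaligned store */
}
```

In C11, a buffer for the aligned variant could be declared with _Alignas(16), or obtained from aligned_alloc; both are standard facilities rather than part of the intrinsics headers.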

Specialized instructions exist for reading and writing single- and double-precision floating-point data (128 bits long), namely _mm_loadu_ps/_mm_storeu_ps and _mm_loadu_pd/_mm_storeu_pd.

A frequent need is to load less data than the vector register accommodates. To that end, the _mm_loadl_epi64(__m128i* addr) instruction retrieves a contiguous 64-bit array with the start address of addr from RAM and writes it into the least significant half of the selected vector register, setting the bits of the most significant half to zeros. The _mm_storel_epi64(__m128i* addr, __m128i a) instruction, which has the reverse effect, copies the least significant 64 bits of the register into RAM, starting from the address addr.

The _mm_cvtsi32_si128(int32_t a) instruction copies a 32-bit integer variable into the least significant 32 bits of the vector register, setting the rest to zeros. The _mm_cvtsi128_si32(__m128i a) instruction works in the opposite direction by copying the least significant 32 bits of the register into an integer variable.
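
As an illustration of these partial loads, the sketch below fills both halves of a 128-bit register from two separate 64-bit values in memory. It is one possible approach, not the only one: each value is loaded with _mm_loadl_epi64, and the two low halves are then combined with _mm_unpacklo_epi64 (an interleaving instruction covered later in the text). The helper names load_two_halves and roundtrip32 are hypothetical.

```c
#include <stdint.h>
#include <emmintrin.h>   /* SSE2 intrinsics */

/* Hypothetical helper: *lo goes to bits 0..63, *hi to bits 64..127. */
__m128i load_two_halves(const int64_t *lo, const int64_t *hi)
{
    __m128i l = _mm_loadl_epi64((const __m128i *)lo);  /* { *lo, 0 } */
    __m128i h = _mm_loadl_epi64((const __m128i *)hi);  /* { *hi, 0 } */
    return _mm_unpacklo_epi64(l, h);                   /* { *lo, *hi } */
}

/* Hypothetical helper: moves a 32-bit scalar into element 0 and back. */
int32_t roundtrip32(int32_t x)
{
    __m128i v = _mm_cvtsi32_si128(x);   /* { x, 0, 0, 0 } */
    return _mm_cvtsi128_si32(v);        /* reads element 0 */
}
```

When the two values are already in scalar variables, _mm_set_epi64x(hi, lo) expresses the same result and leaves the choice of instructions to the compiler.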

Logical and comparison operations

The SSE2 instruction set provides instructions that perform the following logical operations: AND, OR, XOR, and AND-NOT. The corresponding instructions are named _mm_and_si128, _mm_or_si128, _mm_xor_si128, and _mm_andnot_si128. Note that _mm_andnot_si128(a, b) inverts its first operand before the AND, that is, it computes (~a) & b rather than a true NAND. Otherwise, these instructions are fully analogous to the corresponding integer bitwise operations, with the difference being that the data size is 128 bits instead of 32 or 64.

The frequently used _mm_setzero_si128() instruction, which sets all the bits of the target register to zeros, is implemented using the XOR operation where the same register is used for both operands.

Logical instructions are closely related to comparison instructions. These compare the corresponding elements of two source registers and check if a specific condition (for example, equality or greater-than) is satisfied. If the condition is satisfied, all the bits of the element in the target register are set to ones; otherwise, they are set to zeros. For example, the _mm_cmpeq_epi32(__m128i a, __m128i b) instruction checks if the 32-bit elements of the registers a and b are equal to each other. The results of several different condition checks can be combined using logical instructions.
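
A typical use of a comparison mask together with logical instructions is a branch-free per-element selection. The sketch below computes the element-wise maximum of signed 32-bit integers using only SSE2; the helper name max_epi32_sse2 is hypothetical.

```c
#include <stdint.h>
#include <emmintrin.h>   /* SSE2 intrinsics */

/* Hypothetical helper: per-element maximum of signed 32-bit integers. */
__m128i max_epi32_sse2(__m128i a, __m128i b)
{
    __m128i mask   = _mm_cmpgt_epi32(a, b);       /* all ones where a > b  */
    __m128i from_a = _mm_and_si128(mask, a);      /* a where a > b, else 0 */
    __m128i from_b = _mm_andnot_si128(mask, b);   /* (~mask) & b           */
    return _mm_or_si128(from_a, from_b);          /* merge the two parts   */
}
```

SSE4.1 later added _mm_max_epi32, which does this in a single instruction; the mask-and-merge pattern remains useful for arbitrary selections.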

Arithmetic and shifting operations

This group of instructions is, without doubt, the most commonly used.

For floating-point calculations, both x86 and ARM have instructions that implement all four arithmetic operations and square root computation for single- and double-precision numbers. The x86 architecture has the following instructions for single-precision numbers: _mm_add_ps, _mm_sub_ps, _mm_mul_ps, _mm_div_ps, and _mm_sqrt_ps.

Let us consider a simple example of floating-point arithmetic operations. Like in the example from Section 2 (1.2.1), the elements of two arrays, src0 and src1, are summed here, and the result is written into the array dst. The number of elements to be summed is specified in the parameter len. If len is not a multiple of the number of elements that the vector register accommodates (in this case, four and two), the rest of the elements are processed conventionally, without vectorization.

- // 1.3.1 Sum of elements of two arrays

- #include <stddef.h> /* for size_t */

- #include <emmintrin.h> /* necessary for SSE and SSE2 */

- void sum_float( float src0[], float src1[], float dst[], size_t len)

- {

- __m128 x0, x1; // floating-point, single precision

- size_t len4 = len & ~(size_t)0x03; // largest multiple of 4 not exceeding len

- for(size_t i = 0; i < len4; i+=4)

- {

- x0 = _mm_loadu_ps(src0 + i); // loading of four float values

- x1 = _mm_loadu_ps(src1 + i);

- x0 = _mm_add_ps(x0,x1);

- _mm_storeu_ps(dst + i, x0);

- }

- for(size_t i = len4; i < len; i++) // remaining elements, without vectorization

- dst[i] = src0[i] + src1[i];

- }

- void sum_double( double src0[], double src1[], double dst[], size_t len)

- {

- __m128d x0, x1; // floating-point, double precision

- size_t len2 = len & ~(size_t)0x01; // largest multiple of 2 not exceeding len

- for(size_t i = 0; i < len2; i+=2)

- {

- x0 = _mm_loadu_pd(src0 + i ); // loading of two double values

- x1 = _mm_loadu_pd(src1 + i );

- x0 = _mm_add_pd(x0,x1);

- _mm_storeu_pd(dst + i, x0);

- }

- if(len2 != len) // at most one element remains

- dst[len2] = src0[len2] + src1[len2];

- }

For a specific integer arithmetic operation, there are usually several instructions of the same type, each tailored to data of a specific size. Consider addition and subtraction. For 16-bit signed integers, the _mm_add_epi16 instruction performs addition and the _mm_sub_epi16 instruction performs subtraction. Similar instructions exist for 8-, 32-, and 64-bit integers. The same goes for the left and right logical shift that is implemented for the data sizes of 16, 32, and 64 bits (_mm_slli_epi16 and _mm_srli_epi16, respectively, in the case of 16 bits). However, the right arithmetic shift is implemented only for 16- and 32-bit data sizes: this operation is performed by the _mm_srai_epi16 and _mm_srai_epi32 instructions. ARM NEON also provides instructions for these operations, spanning the data sizes of 8, 16, 32, and 64 bits, both signed and unsigned.
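
The difference between the logical and the arithmetic right shift matters for negative values: the logical shift fills the vacated bits with zeros, while the arithmetic shift replicates the sign bit. A minimal sketch (the helper name shift_right_both is hypothetical):

```c
#include <stdint.h>
#include <emmintrin.h>   /* SSE2 intrinsics */

/* Hypothetical helper: shifts eight 16-bit values right by 2 bits,
   once logically and once arithmetically. */
void shift_right_both(const int16_t in[8],
                      uint16_t logical[8], int16_t arithmetic[8])
{
    __m128i v = _mm_loadu_si128((const __m128i *)in);
    _mm_storeu_si128((__m128i *)logical,    _mm_srli_epi16(v, 2)); /* zero fill */
    _mm_storeu_si128((__m128i *)arithmetic, _mm_srai_epi16(v, 2)); /* sign fill */
}
```

For the input value -8 (bit pattern 0xFFF8), the logical shift yields 0x3FFE, whereas the arithmetic shift yields -2; for non-negative inputs both results coincide.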

The _mm_slli_si128(__m128i a, int imm) and _mm_srli_si128(__m128i a, int imm) instructions treat the register contents as a 128-bit number and shift it by imm bytes (not bits!) to the left and right, respectively.

The SSE3 and SSSE3 instruction sets introduce instructions for horizontal addition (Fig. 3): _mm_hadd_ps(__m128 a, __m128 b), _mm_hadd_pd(__m128d a, __m128d b), _mm_hadd_epi16(__m128i a, __m128i b), and _mm_hadd_epi32(__m128i a, __m128i b). With horizontal addition, the adjacent elements of the same register are added together. Horizontal subtraction instructions are also provided (_mm_hsub_ps etc.) that subtract numbers in the same way. Similar instructions implementing pairwise addition (e.g. vpaddq_s16(int16x8_t a, int16x8_t b)) exist among the ARM NEON instructions.

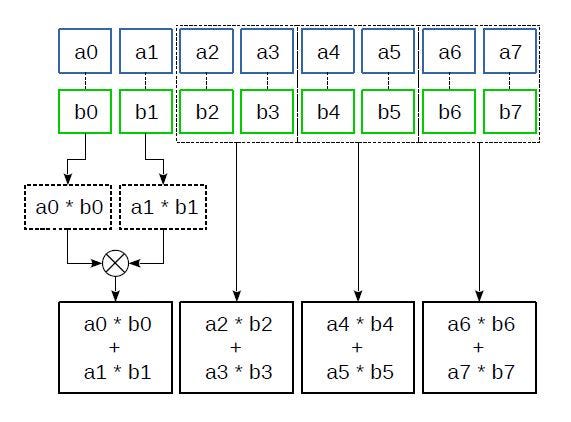

Generally, with integer multiplication, the bit depth of the product is equal to the sum of the bit depths of the multipliers. Thus, multiplying 16-bit elements of one register by the corresponding elements of another will, in the general case, yield 32-bit products that will require two registers instead of one to accommodate.

The _mm_mullo_epi16(__m128i a, __m128i b) instruction multiplies 16-bit elements of the registers a and b, writing the least significant 16 bits of the 32-bit product into the target register. Its analogue _mm_mulhi_epi16(__m128i a, __m128i b) writes the most significant 16 bits of the product into the target register. The results produced by these instructions can be combined into 32-bit products using the _mm_unpacklo_epi16 and _mm_unpackhi_epi16 instructions that we will discuss below. Of course, if the multipliers are small enough, _mm_mullo_epi16 alone will suffice.
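
The combination described above can be sketched as follows. The helper name mul16_to_32 is hypothetical; it multiplies eight pairs of signed 16-bit integers and stores the eight full 32-bit products.

```c
#include <stdint.h>
#include <emmintrin.h>   /* SSE2 intrinsics */

/* Hypothetical helper: out[i] = (int32_t)a[i] * b[i] for i = 0..7. */
void mul16_to_32(const int16_t a[8], const int16_t b[8], int32_t out[8])
{
    __m128i va = _mm_loadu_si128((const __m128i *)a);
    __m128i vb = _mm_loadu_si128((const __m128i *)b);
    __m128i lo = _mm_mullo_epi16(va, vb);   /* bits 0..15 of each product  */
    __m128i hi = _mm_mulhi_epi16(va, vb);   /* bits 16..31 of each product */

    /* Interleaving the low and high words rebuilds the 32-bit products. */
    _mm_storeu_si128((__m128i *)out,
                     _mm_unpacklo_epi16(lo, hi));   /* products 0..3 */
    _mm_storeu_si128((__m128i *)(out + 4),
                     _mm_unpackhi_epi16(lo, hi));   /* products 4..7 */
}
```

For unsigned inputs, _mm_mulhi_epu16 would take the place of _mm_mulhi_epi16; the low halves are the same in both cases.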

The _mm_madd_epi16(__m128i a, __m128i b) instruction multiplies 16-bit elements of the registers a and b and then adds together the resulting adjacent 32-bit products (Fig. 4). This instruction has proved especially useful for implementing various filters, discrete cosine transforms, and other transforms where many combined multiplications and additions are needed: it converts the products into the convenient 32-bit format right away and reduces the number of additions required.

ARM NEON has quite a diverse set of multiplication instructions. For example, it provides instructions that increase the product size (like vmull_s16) and those that do not, and it has instructions that multiply a vector by a scalar (such as vmul_n_f32). There is no instruction similar to _mm_madd_epi16 in NEON; instead, multiply-and-accumulate instructions are provided that work according to the formula $a_i = a_i + b_i c_i$, $i = 1, \dots, n$. Instructions like this also exist in the x86 architecture (the FMA instruction set), but only for floating-point numbers.

As for integer vector division, it is not implemented on x86 or in ARM NEON.

Permutation and Interleaving

The processor instructions of the type discussed below do not have scalar counterparts. When they are executed, no new values are produced. Instead, either the data is permuted inside the register, or the data from several source registers is written into the target register in a specific order. These instructions do not look very useful at first glance but are, in fact, extremely important. Many algorithms cannot be implemented efficiently without them.

Several x86 and ARM vector instructions implement copying by mask (Fig. 5). Consider having a source array, a target array, and an index array identical in size to the target, where each element in the index array corresponds to a target array element. The value of an index array element points to the source array element that is to be copied to the corresponding target array element. By specifying different indexes, all kinds of element permutations and duplications can be implemented.

Vector instructions use vector registers or their combinations as the source and target arrays. The index array can be a vector register or an integer constant with bit groups corresponding to the target register elements and coding the source register elements.

One of the instructions that implement copying by mask is the SSE2 _mm_shuffle_epi32(__m128i a, const int im) instruction that copies the selected 32-bit elements of the source register into the target register. The index array is the second operand, an integer constant that specifies the copy mask. This instruction is typically used with the standard macro _MM_SHUFFLE that offers a more intuitive way to specify the copy mask. For example, when executing

a = _mm_shuffle_epi32(b,_MM_SHUFFLE(0,1,2,3));

the 32-bit elements of b are written into the a register in reverse order. And when executing

a = _mm_shuffle_epi32(b,_MM_SHUFFLE(2,2,2,2));

the same value, namely the third element of the b register, is written into all elements of the a register.
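
Both masks can be checked with a small self-contained sketch (the helper names reverse_epi32 and broadcast_third are hypothetical). The arguments of _MM_SHUFFLE are listed from the most significant target element down to the least significant one, and each argument is the index of the source element to copy.

```c
#include <stdint.h>
#include <emmintrin.h>   /* SSE2 intrinsics; also provides _MM_SHUFFLE */

/* Hypothetical helper: out = in with its four elements in reverse order. */
void reverse_epi32(const int32_t in[4], int32_t out[4])
{
    __m128i b = _mm_loadu_si128((const __m128i *)in);
    /* target 3 <- source 0, target 2 <- source 1, and so on */
    __m128i a = _mm_shuffle_epi32(b, _MM_SHUFFLE(0, 1, 2, 3));
    _mm_storeu_si128((__m128i *)out, a);
}

/* Hypothetical helper: every element of out = in[2] (the third element). */
void broadcast_third(const int32_t in[4], int32_t out[4])
{
    __m128i b = _mm_loadu_si128((const __m128i *)in);
    __m128i a = _mm_shuffle_epi32(b, _MM_SHUFFLE(2, 2, 2, 2));
    _mm_storeu_si128((__m128i *)out, a);
}
```

For the input {10, 20, 30, 40}, the first function produces {40, 30, 20, 10} and the second {30, 30, 30, 30}.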

The _mm_shufflelo_epi16 and _mm_shufflehi_epi16 instructions work in a similar fashion, but copy the selected 16-bit elements from the least significant and, respectively, the most significant half of the register and write the other half into the target register as is. As an example, we will show how these instructions can be used along with _mm_shuffle_epi32 to arrange the 16-bit elements of a 128-bit register in the reverse order in only three operations. Here is how it is done:

// a: a0 a1 a2 a3 a4 a5 a6 a7

a = _mm_shuffle_epi32(a, _MM_SHUFFLE(1,0,3,2)); // a4 a5 a6 a7 a0 a1 a2 a3

a = _mm_shufflelo_epi16(a, _MM_SHUFFLE(0,1,2,3)); // a7 a6 a5 a4 a0 a1 a2 a3

a = _mm_shufflehi_epi16(a, _MM_SHUFFLE(0,1,2,3)); // a7 a6 a5 a4 a3 a2 a1 a0

First, the most and least significant halves of the register are swapped, and then the 16-bit elements of each half are arranged in the reverse order.

The _mm_shuffle_epi8(__m128i a, __m128i i) instruction from the SSSE3 set also performs copying by mask but operates on bytes. (However, this instruction uses the same register as the source and target, so it is more of a "permutation by mask".) The indices are specified by the byte values in the second register operand. This instruction allows much more diverse permutations than the instructions discussed earlier, making it possible to simplify and speed up the computations in many cases. Thus, the entire above example can be reimplemented as a single instruction:

a = _mm_shuffle_epi8(a, i);

For this, the bytes of the i register should have the following values (starting from the least significant byte): 14,15,12,13,10,11,8,9,6,7,4,5,2,3,0,1.

In ARM NEON, copying by mask is implemented using several instructions that work with one source register (e.g., vtbl1_s8(int8x8_t a, int8x8_t idx)) or a group of registers (e.g., vtbl4_u8(uint8x8x4_t a, uint8x8_t idx)). The vqtbl1q_u8(uint8x16_t t, uint8x16_t idx) instruction is similar to _mm_shuffle_epi8.

Another operation implemented using vector instructions is interleaving. Consider the following arrays: array A with the elements a0,a1,…,an, B with the elements b0,b1,…,bn, … and Z with the elements z0,z1,…,zn. When interleaved, the elements of these arrays are combined into one array in the following order: a0,b0,…,z0,a1,b1,…,z1,…,an,bn,…,zn (Fig. 6). The corresponding vector instructions also use registers, only two of them, as the source arrays. Obviously, as this operation does not change the size of the data, there should also be two target registers.

Vector instructions on x86 can have only one target register, therefore the interleaving instructions process only half of the input data. Thus, _mm_unpacklo_epi16(__m128i a, __m128i b) interleaves the 16-bit elements of the least significant halves of the a and b registers, and its _mm_unpackhi_epi16(__m128i a, __m128i b) counterpart does the same with the most significant halves. 8-, 32-, and 64-bit instructions work similarly. The _mm_unpacklo_epi64 and _mm_unpackhi_epi64 instructions essentially combine the least and, respectively, most significant 64 bits of the two registers. Paired instructions are often used together.
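
The paired use of the low and high variants can be sketched with a function that interleaves two arrays of eight 16-bit values (for instance, two audio channels) into one array of sixteen. The helper name interleave_i16 is hypothetical.

```c
#include <stdint.h>
#include <emmintrin.h>   /* SSE2 intrinsics */

/* Hypothetical helper: out = a0 b0 a1 b1 ... a7 b7. */
void interleave_i16(const int16_t a[8], const int16_t b[8], int16_t out[16])
{
    __m128i va = _mm_loadu_si128((const __m128i *)a);
    __m128i vb = _mm_loadu_si128((const __m128i *)b);

    /* Low halves: a0 b0 a1 b1 a2 b2 a3 b3 */
    _mm_storeu_si128((__m128i *)out, _mm_unpacklo_epi16(va, vb));

    /* High halves: a4 b4 a5 b5 a6 b6 a7 b7 */
    _mm_storeu_si128((__m128i *)(out + 8), _mm_unpackhi_epi16(va, vb));
}
```

Together, the two instructions consume all of the input data and fill the two target registers that a complete interleave requires.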

Similar instructions exist in ARM NEON (the VZIP instruction family). Some of them use two target registers instead of one and thus process the entirety of the input data. There are also instructions that work in reverse (VUZP), for which there are no equivalents on x86.

The _mm_alignr_epi8(__m128i a, __m128i b, int imm) instruction treats a and b as one 32-byte block in which b occupies the least significant half, shifts this block right by imm bytes, and keeps the least significant 16 bytes. In other words, it copies the bytes of the register b into the target register, starting from the selected byte imm, and copies the rest from the register a, starting from the least significant byte. Let the bytes of the a register have the values a0..a15, and the bytes of the b register have the values b0..b15. Then, when executing

a = _mm_alignr_epi8(a, b, 5);

the following bytes will be written into the a register (starting from the least significant byte): b5, b6, b7, b8, b9, b10, b11, b12, b13, b14, b15, a0, a1, a2, a3, a4. ARM NEON provides instructions of this kind that work with elements of specific size instead of bytes [3].

AVX and AVX2 instructions

The further development of x86 vector instructions is marked by the advent of 256-bit AVX and AVX2 instructions. What do these instructions offer to developers?

First of all, instead of eight (or sixteen) 128-bit XMM registers, there are sixteen 256-bit registers, YMM0–YMM15, in which the least significant 128 bits are the XMM vector registers. Unlike SSE, these instructions take three, not two register operands: two source registers and one target register. The contents of the source registers are not lost after executing an instruction.

Almost all operations implemented in the earlier SSE–SSE4.2 instruction sets are present in AVX/AVX2, most importantly the arithmetic ones. There are instructions that are fully analogous to _mm_add_epi32, _mm_madd_epi16, _mm_sub_ps, _mm_slli_epi16 and many others, but process twice as many elements per instruction.

AVX/AVX2 have no exact equivalents for _mm_loadl_epi64 and _mm_cvtsi32_si128 (and the corresponding output instructions). Instead, the _mm256_maskload_epi32 and _mm256_maskload_epi64 instructions are introduced that load the required number of 32- and 64-bit values from RAM using a mask.

New instructions such as _mm256_i32gather_epi32, _mm256_i64gather_epi64, and their floating-point equivalents have been added that load data in blocks using the start address and block offsets as opposed to a contiguous array. These are especially useful when the desired data is not stored contiguously in RAM, and many operations are needed to retrieve and combine it.

AVX2 has data interleaving and permutation instructions, such as _mm256_shuffle_epi32 and _mm256_alignr_epi8. They have a unique property that differentiates them from the rest of the AVX/AVX2 instructions. For example, the regular arithmetic instructions treat the YMM register as a 256-bit array. In contrast, these instructions treat YMM as two 128-bit registers and perform operations on them in exactly the same manner as the corresponding SSE instruction.

Consider a register with the following 32-bit elements: A0, A1, A2, A3, A4, A5, A6, A7. Then, after executing

a = _mm256_shuffle_epi32(a, _MM_SHUFFLE(0,1,2,3));

the register contents change to A3, A2, A1, A0, A7, A6, A5, A4.
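
This per-half behavior can be modeled without AVX2 hardware. The sketch below is only a model, not AVX2 code: it represents the 256-bit register as two 128-bit halves and applies the SSE2 _mm_shuffle_epi32 to each half separately, which is the behavior described above for _mm256_shuffle_epi32. The helper name lanewise_reverse_epi32 is hypothetical.

```c
#include <stdint.h>
#include <emmintrin.h>   /* SSE2 intrinsics */

/* Hypothetical model of _mm256_shuffle_epi32(a, _MM_SHUFFLE(0,1,2,3)):
   the eight 32-bit elements are handled as two independent groups of four. */
void lanewise_reverse_epi32(const int32_t in[8], int32_t out[8])
{
    __m128i lo = _mm_loadu_si128((const __m128i *)in);        /* A0..A3 */
    __m128i hi = _mm_loadu_si128((const __m128i *)(in + 4));  /* A4..A7 */

    lo = _mm_shuffle_epi32(lo, _MM_SHUFFLE(0, 1, 2, 3));      /* A3 A2 A1 A0 */
    hi = _mm_shuffle_epi32(hi, _MM_SHUFFLE(0, 1, 2, 3));      /* A7 A6 A5 A4 */

    _mm_storeu_si128((__m128i *)out, lo);
    _mm_storeu_si128((__m128i *)(out + 4), hi);
}
```

No element crosses from one half to the other, which is why a full 256-bit reversal needs an additional instruction that permutes across the halves.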

Other instructions, such as _mm256_unpacklo_epi16, _mm256_shuffle_epi8, and _mm256_alignr_epi8, work in a similar way.

New permutation and interleaving instructions have also been added in AVX2. For instance, _mm256_permute4x64_epi64(__m256i a, int imm) permutes 64-bit register elements similarly to how _mm_shuffle_epi32 permutes 32-bit elements.

Where do I get information on vector instructions?

First, visit the official websites of microprocessor vendors. Intel has an online reference where you can find a comprehensive description of intrinsics from all instruction sets. A similar reference exists for ARM CPUs.

If you would like to learn about the practical use of vector instructions, refer to the free-software implementations of audio and video codecs. Projects such as FFmpeg, VP9, and OpenHEVC use vector instructions, and the source code of these projects provides examples of their use.

To read about the sources of this article, see the full version.

Author:

Dmitry Farafonov. An Elecard engineer. He has been working on the optimization of audio and video codecs, as well as programs for processing audio and video signals, since 2015.

Source: https://medium.com/@videocompressionguru/vector-instructions-part-i-343723b103f
